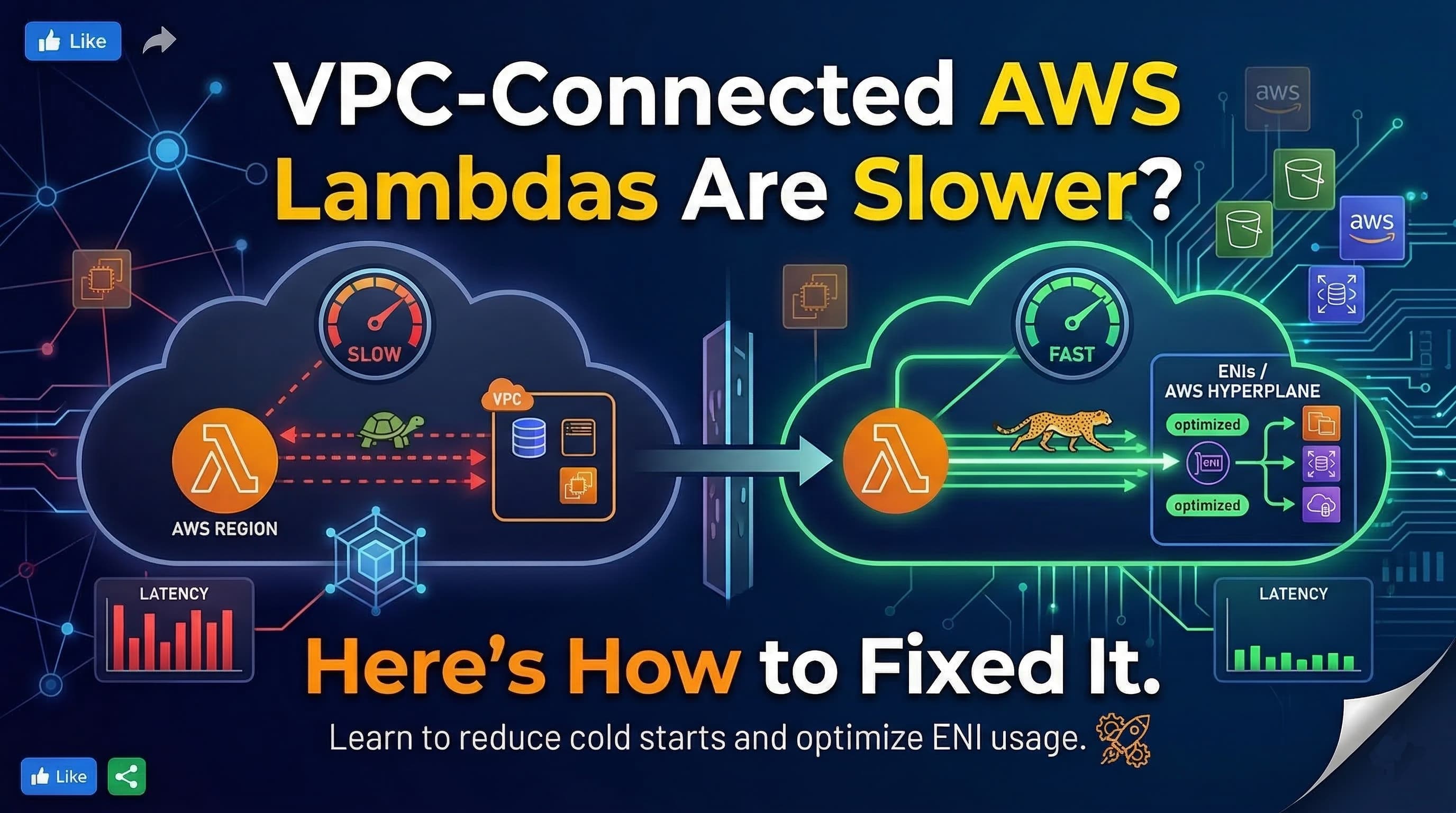

VPC-Connected AWS Lambdas Are Slower? Here’s How to Fix It

Cloud Engineer | AWS Community Builder

Serverless computing with AWS Lambda is one of the most powerful ways to run code in the cloud without managing servers. But when a Lambda function is placed inside a VPC (Virtual Private Cloud) to connect to services like Amazon RDS, ElastiCache, or private APIs, many developers notice one frustrating change:

⚠️ Cold starts become slower, historically increasing from ~100 ms to nearly 1 second.

This slowdown has long been considered one of the trade-offs of using Lambda with VPCs. But how bad is it today? Does AWS still suffer from large cold start delays inside a VPC?

To find out, we ran a real-world experiment comparing cold starts of the same Lambda function inside a VPC and outside a VPC, measured execution times, and explored why these differences happen and how to fix them.

Q) Why Can Lambda Cold Starts Be Slower in a VPC?

A “cold start” occurs when AWS spins up a new execution environment for your Lambda function. This usually happens when the function hasn’t been invoked for a while or when scaling up to handle more requests.

When a Lambda function runs outside a VPC, AWS handles networking internally, so the container can start almost immediately.

When it’s placed inside a VPC, however, Lambda must first:

Create and attach an Elastic Network Interface (ENI) to your VPC subnet

Assign private IP addresses

Apply security groups

This ENI setup adds latency to the cold start. Historically, this could add 600–1200 ms to initialization.

AWS has since improved this process with Hyperplane ENIs, which reuse network interfaces more efficiently, but the only way to know the real impact is to measure it yourself.

Step 1: Writing a Sample Lambda Function

Let’s create a simple Python Lambda function to measure cold start time and container reuse.

import datetime
import time

# This runs once when the container is initialized (cold start)
INIT_TIME = time.time()


def lambda_handler(event, context):
    start = time.time()

    # Measure how long the container has been alive
    uptime_since_init = start - INIT_TIME

    # Simulate a small workload
    time.sleep(0.1)

    end = time.time()
    execution_duration = end - start

    # Timezone-aware UTC timestamp (datetime.utcnow() is deprecated since Python 3.12)
    now_utc = datetime.datetime.now(datetime.timezone.utc)

    return {
        "statusCode": 200,
        "timestamp": now_utc.isoformat().replace("+00:00", "Z"),
        "execution_duration": f"{execution_duration:.4f} seconds",
        "uptime_since_init": f"{uptime_since_init:.4f} seconds",
        "message": "Hello from Lambda!"
    }

What the Code Does

INIT_TIME is set once when the Lambda container starts; this is how we detect cold starts.

uptime_since_init tells us how long the container has been alive:

Near 0 → cold start

Large number → warm start

execution_duration measures how long the function itself takes to execute.

We also add a small time.sleep(0.1) to simulate a minimal workload.
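Reading uptime_since_init requires choosing a threshold for "near 0". As a complementary sketch (not part of the experiment above), a module-level boolean can report cold versus warm explicitly. The `_is_cold_start` flag and the `cold_start` response key are hypothetical names introduced here for illustration.

```python
import time

# Module-level state is initialized once per execution environment,
# so it persists across warm invocations of that environment.
INIT_TIME = time.time()
_is_cold_start = True  # hypothetical flag, not in the original function


def lambda_handler(event, context):
    global _is_cold_start

    # Capture the flag for this invocation, then flip it so every later
    # invocation in the same environment reports a warm start.
    cold = _is_cold_start
    _is_cold_start = False

    return {
        "statusCode": 200,
        "cold_start": cold,
        "uptime_since_init": f"{time.time() - INIT_TIME:.4f} seconds",
    }
```

The first call in a fresh environment returns `"cold_start": True`; every later call in that environment returns `False`, regardless of how long the environment has been alive.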

Step 2: Deploy Two Versions of the Lambda

To compare performance:

Lambda A (outside VPC): Create a Lambda and leave the VPC setting as “No VPC.”

Lambda B (inside VPC): Create another Lambda in a private subnet of a VPC.

Both functions used 128 MB memory and the same code above.
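If you prefer to script the two deployments rather than use the console, the sketch below builds the keyword arguments for boto3's Lambda `create_function` call. The only difference between the two functions is the `VpcConfig` entry. The helper name, the `python3.12` runtime, the handler path, and the 10-second timeout are assumptions made for illustration; the article does not state them.

```python
def build_create_function_args(name, role_arn, zip_bytes,
                               subnet_ids=None, security_group_ids=None):
    """Return keyword arguments for boto3's lambda create_function call."""
    args = {
        "FunctionName": name,
        "Runtime": "python3.12",  # assumption: any supported Python runtime
        "Role": role_arn,
        "Handler": "lambda_function.lambda_handler",  # assumption: default file name
        "Code": {"ZipFile": zip_bytes},
        "MemorySize": 128,  # same memory for both functions, as in the test
        "Timeout": 10,      # assumption: not specified in the article
    }
    if subnet_ids:
        if not security_group_ids:
            raise ValueError("a VPC-attached function needs at least one security group")
        # This block is the only difference between Lambda A and Lambda B.
        args["VpcConfig"] = {
            "SubnetIds": list(subnet_ids),
            "SecurityGroupIds": list(security_group_ids),
        }
    return args
```

Passing the result to `boto3.client("lambda").create_function(**args)` would create the function. For the VPC variant, the execution role also needs permission to manage network interfaces, for example through the AWSLambdaVPCAccessExecutionRole managed policy.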

Step 3: Invoke Both Functions 10 Times

Invoke each function 10 times at 30-second intervals. We focus on the first invocation (cold start) and subsequent ones (warm starts).
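One way to automate this step is to invoke the function with `LogType="Tail"` and read the Init Duration from the REPORT line that Lambda appends to each invocation's log. The sketch below is a rough illustration, not the exact script used for this experiment. `parse_report` and `invoke_series` are hypothetical helper names, and `client` is assumed to be a `boto3.client("lambda")` object.

```python
import base64
import re
import time

# Matches fields such as "Init Duration: 114.98 ms" or "Duration: 102.25 ms".
_FIELD = re.compile(r"(Init Duration|Billed Duration|Duration): ([\d.]+) ms")


def parse_report(log_text):
    """Extract timing fields (in ms) from the REPORT line of a Lambda log tail."""
    for line in log_text.splitlines():
        if line.startswith("REPORT"):
            return {name: float(value) for name, value in _FIELD.findall(line)}
    return {}


def invoke_series(client, function_name, count=10, interval_s=30):
    """Invoke a function repeatedly and collect the parsed REPORT fields."""
    results = []
    for i in range(count):
        # LogType="Tail" returns the last part of the log, base64-encoded.
        response = client.invoke(FunctionName=function_name, LogType="Tail")
        log_text = base64.b64decode(response["LogResult"]).decode("utf-8")
        results.append(parse_report(log_text))
        if i < count - 1:
            time.sleep(interval_s)
    return results
```

An entry that contains an "Init Duration" key came from a cold start; warm invocations omit that field.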

Results: Outside VPC

First invocation (cold start):

Init Duration: 114.98 ms

execution_duration: ~0.1001 seconds

uptime_since_init: ~0.0058 seconds

Subsequent invocations (warm):

Init Duration: — (not shown)

execution_duration: ~0.1001 seconds

uptime_since_init: increasing

Outside a VPC, cold starts were about 115 ms, and warm invocations stayed consistently around 102 ms.

Results: Inside VPC

First invocation (cold start):

Init Duration: 820 ms

execution_duration: ~0.100 s

uptime_since_init: ~0.006 s

Subsequent invocations (warm):

Init Duration: — (not shown)

execution_duration: ~0.100 s

uptime_since_init: increasing

Inside a VPC, cold starts were about 820 ms, and warm invocations stayed consistently around 102 ms.

Side-by-Side Comparison

| Environment | Cold Start (Init Duration) | Warm Execution Time |
|---|---|---|
| Outside VPC | ~115 ms | ~102 ms |
| Inside VPC | ~820 ms | ~102 ms |

Observation

Lambda functions outside a VPC start up roughly 7× faster during cold starts. Once warm, both perform about the same, but that cold start latency can significantly impact real-world applications.
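The ratio can be checked directly from the Init Duration values reported in the results (114.98 ms outside the VPC, 820 ms inside). A minimal sketch of the arithmetic:

```python
# Init Duration values from the two cold-start measurements (milliseconds).
init_outside_ms = 114.98
init_inside_ms = 820.0

ratio = init_inside_ms / init_outside_ms
extra_ms = init_inside_ms - init_outside_ms

print(f"VPC cold start is {ratio:.1f}x the non-VPC cold start")  # 7.1x
print(f"Extra init time per cold start: {extra_ms:.0f} ms")      # 705 ms
```

In absolute terms, each cold start inside the VPC cost about 705 ms more than one outside it.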

Q) Why Does the Gap Exist?

The big gap is almost entirely due to ENI setup inside a VPC. AWS must create and attach a network interface before the function can run. That adds several hundred milliseconds of delay, especially if the Lambda has been idle or deployed to a new subnet.

Even with AWS’s Hyperplane ENIs and reuse improvements, ENI creation still happens under certain conditions (e.g., first invocation, long idle times), and you’ll feel that delay.

Step 4: Reducing Cold Start Latency

If your Lambda must run inside a VPC (for example, to reach a database), here’s how to reduce the impact:

1. Enable Provisioned Concurrency

Provisioned concurrency keeps execution environments warm and ready:

aws lambda put-provisioned-concurrency-config \
  --function-name MyVpcLambda \
  --qualifier prod \
  --provisioned-concurrent-executions 5

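This eliminates most cold start delays, even inside a VPC, for requests served by the pre-initialized environments. The qualifier must be a published version or an alias (here, an alias named prod); provisioned concurrency cannot be configured on $LATEST.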
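The same configuration can be applied from Python. The sketch below wraps the boto3 Lambda calls `put_provisioned_concurrency_config` and `get_provisioned_concurrency_config`. The helper name is hypothetical, and `client` is assumed to be a `boto3.client("lambda")` object.

```python
def enable_provisioned_concurrency(client, function_name, qualifier, count):
    """Request provisioned concurrency and return the status Lambda reports."""
    client.put_provisioned_concurrency_config(
        FunctionName=function_name,
        Qualifier=qualifier,  # a published version or alias, not $LATEST
        ProvisionedConcurrentExecutions=count,
    )
    config = client.get_provisioned_concurrency_config(
        FunctionName=function_name,
        Qualifier=qualifier,
    )
    # Status is "IN_PROGRESS" while environments are being initialized,
    # then "READY" (or "FAILED" if allocation did not succeed).
    return config["Status"]
```

For example, `enable_provisioned_concurrency(client, "MyVpcLambda", "prod", 5)` mirrors the CLI command. The environments only absorb cold starts once the status reaches READY, so a deployment script would typically poll until then.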

2. Use VPC Endpoints

If your Lambda calls other AWS services (e.g., S3 or DynamoDB), create VPC endpoints so that traffic to those services avoids NAT Gateway latency.
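As a rough sketch, a gateway endpoint for S3 or DynamoDB can be created with boto3's EC2 `create_vpc_endpoint` call. The helper below only builds the arguments. Its name is hypothetical, and the VPC ID, region, and route table IDs are placeholders you would replace with your own values.

```python
def build_gateway_endpoint_args(vpc_id, region, service, route_table_ids):
    """Return keyword arguments for boto3's ec2 create_vpc_endpoint call."""
    # Gateway endpoints are offered only for S3 and DynamoDB;
    # other services use interface endpoints instead.
    if service not in ("s3", "dynamodb"):
        raise ValueError("gateway endpoints exist only for s3 and dynamodb")
    return {
        "VpcEndpointType": "Gateway",
        "VpcId": vpc_id,
        "ServiceName": f"com.amazonaws.{region}.{service}",
        # Routes to the service are added to these route tables.
        "RouteTableIds": list(route_table_ids),
    }
```

Calling `boto3.client("ec2").create_vpc_endpoint(**args)` with the result would create the endpoint. The route tables passed in should be the ones associated with the Lambda's private subnets.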

3. Increase Memory Size

Higher memory allocation gives your Lambda proportionally more CPU power, which can reduce cold start times. Even increasing from 128 MB to 512 MB can cut cold start time by 30–40%.
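To try a larger memory size and then re-run the cold start measurement, the setting can be changed with boto3's `update_function_configuration`. This is a minimal sketch: the helper name is hypothetical, and `client` is assumed to be a `boto3.client("lambda")` object.

```python
def set_memory_size(client, function_name, memory_mb):
    """Update a function's memory setting and return the value Lambda reports."""
    # Lambda accepts memory sizes from 128 MB up to 10,240 MB.
    if not 128 <= memory_mb <= 10240:
        raise ValueError("Lambda memory must be between 128 and 10240 MB")
    response = client.update_function_configuration(
        FunctionName=function_name,
        MemorySize=memory_mb,
    )
    return response["MemorySize"]
```

Changing the configuration causes subsequent invocations to use new execution environments, so the next invocation after the update is a convenient cold start to measure.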

Key Takeaways

Cold starts happen when AWS spins up a new container for your Lambda.

Lambdas outside a VPC are significantly faster (~100–150 ms) because they don’t require ENI setup.

Lambdas inside a VPC can be 5–8× slower (~600–1200 ms) during cold starts due to ENI creation.

Improvements like Hyperplane ENIs help, but they don’t eliminate the problem.

Provisioned Concurrency, VPC Endpoints, and memory tuning can help reduce latency inside a VPC.

Conclusion

If low latency is critical, keeping Lambda functions outside a VPC is the best choice. They start faster, respond more quickly, and avoid the overhead of ENI setup. Running Lambda inside a VPC is necessary only when connecting to private resources, but you should expect and plan for slower cold starts in that scenario. Even with AWS’s improvements, ENI creation can still add hundreds of milliseconds to startup time.